Weaving on a loom is fun. I gave my son a simple loom for Christmas, and he wove an entire scarf on Christmas Day. This got me thinking about building a computer-controlled loom, maybe one that could weave any pattern imaginable. I liked the idea of taking software back to its very origins with Jacquard and the original Jacquard loom. I also knew there’d be lots of interesting design challenges.

In mid-December, TechShop had filed for bankruptcy. My annual Christmas Project was in full swing, and the loss forced me to “Cancel Christmas”. I was in shock. I’d been using TechShop’s tools and space for 10 years, and no longer having access to their lasers, industrial sewing machines, etc. was paralyzing. By Christmas Day, I was starting to recover and was looking for a new project. Hopefully, one that could use the PCBs that I’d already designed for my canceled project.

I still had access to a 3D printer. Could I design a mostly-3D-printed loom that used the boards’ three stepper motor controllers and Bluetooth LE? I’d give it a shot! The basic idea I had was for a 2-motor loom. One motor would sweep through a bunch of cams, and the other motor would set the position of each cam in turn.

I took the basic specs of my son’s loom as a good starting point for basic size/layout. His is a 10″ loom with 8 threads per inch, so each control section of the loom would have to be 1/8″ wide, and there would be 80 of these sections. An initial sketch of the mechanism indicated 7 parts per section, so the loom would have more than 560 moving parts that would all have to operate flawlessly time after time, row after row, 100s of times per piece of material. I told my friends up front that it seemed impossible and that this thing was never going to work, but working on the design would be really fun and get me out of my post-TechShop project funk.
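The arithmetic behind those numbers is simple enough to check in a few lines. This is just a restatement of the figures above; the variable names are mine.

```python
# Figures taken from the paragraph above; names are illustrative only.
LOOM_WIDTH_IN = 10       # width of the loom in inches
THREADS_PER_INCH = 8     # warp threads per inch
PARTS_PER_SECTION = 7    # from the initial sketch of the mechanism

sections = LOOM_WIDTH_IN * THREADS_PER_INCH      # one control section per thread
section_width_in = 1 / THREADS_PER_INCH          # width available to each section
moving_parts = sections * PARTS_PER_SECTION      # per-section parts only

print(sections, section_width_in, moving_parts)  # 80 0.125 560
```

The carriage, shafts, and bars come on top of that per-section count, which is why the total is "more than" 560.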

Now, real weaving is an art that involves rhythm, consistency, and pattern. This would never be that. The time to update the cams would be too slow for any real rhythm, but there might still be some fun art and process to it, and stringing up the loom would be quick and easy in comparison to warping a traditional loom. I also had some ideas for programmatic pattern generation. Wouldn’t it be neat to weave out something unexpected? Discovering it row by row? That sounded like fun to me.

A much faster system could be built with 80+ solenoids or something, but that didn’t really appeal to me. It would drive the cost way up and certainly wouldn’t take advantage of these circuit boards I had lying around.

The Big Idea

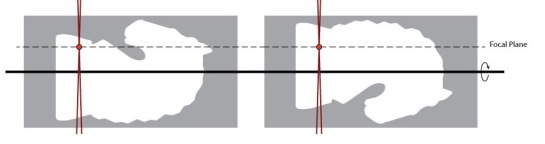

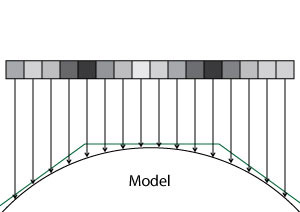

At the heart of the loom is a long row of cams sitting on a square shaft. In order to not have this giant row of cams jamming up horribly, the cams would have to have almost no load. The square shaft locks most of the cams in position but has one section that can rotate to spin a single cam. The main idea was to have the cams not do the lifting and lowering of threads directly, but to have them shift some hooks back and forth. If the hook was over a bar, then the bar could do the work of raising and lowering the threads.

In order to be able to pull threads up or down, I use a second set of hooks and a teeter-totter arrangement. With a second set of bars and hooks, this also makes it very easy to invert the pulling up/down pattern, so an alternating weave requires no change in the cams or hook positions. It also avoids extra loads imposed by something like a per-thread return spring or weight and doubles the distance between the threads.

At first I worked on getting the carriage working. It had to slide back and forth smoothly with little backlash. I did some initial prototypes using just 1/4″ bronze bushings and flat end caps. These basically jammed all the time since the 3D printed flat plates could easily flex enough to take the bushings out of alignment. I changed the design to be much stiffer and to use one pair of linear bearings with a larger main shaft that does all the load bearing and a second 1/4″ shaft with no bearings at all that just provides additional rigidity and alignment.

Would it work at all?

Even though the carriage movement back and forth was now working without jamming, the first time I tried to rotate a cam and seek to the next cam caused a horrible jam. The issue was that after driving the cam there’s still a lot of load from the cam on the square plate, so when you try to seek to the next position that load makes the cam really want to jam on any imperfection between the rotating square segment and the rest of the shaft, and those loads keep adding up as you rotate more cams. Thankfully this was easy to fix in software by overshooting a tiny bit and then returning so there was no longer any load from the cam before trying to seek to the next position. After fixing that, the system seemed to work. I did a 16-thread build and wove this strip of patterned material, then I built it out to 36 threads and wove these pandas.
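The overshoot-and-return fix can be sketched roughly like this. It is not the loom's actual firmware: `FakeStepper`, `rotate_cam_and_unload`, and the overshoot of 3 steps are all stand-ins I made up to show the idea.

```python
class FakeStepper:
    """Hypothetical stand-in for a stepper driver; records commanded positions."""

    def __init__(self):
        self.position = 0
        self.history = []

    def move_to(self, steps):
        self.position = steps
        self.history.append(steps)


def rotate_cam_and_unload(motor, target, overshoot=3):
    """Drive slightly past the target, then come back to it.

    The return move backs the rotating square segment away from the cam,
    so the cam is no longer pressing on it when the carriage seeks onward.
    The overshoot size is an assumed value, not a measured one.
    """
    direction = 1 if target >= motor.position else -1
    motor.move_to(target + direction * overshoot)  # push the cam a bit too far
    motor.move_to(target)                          # back off to relieve the load


motor = FakeStepper()
rotate_cam_and_unload(motor, 100)
rotate_cam_and_unload(motor, 0)
print(motor.history)  # [103, 100, -3, 0]
```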

Problems at scale

So after those successes, I built the loom out to 60 cams. Horrifyingly, at 60 cams I discovered that the hooks really needed to be slightly thinner because their cumulative drag on each other kept adding up. It’s terrible to find issues that only manifest at 60 layers because it is not easy to iterate on a design if you have to reprint 120 hooks for each revision. This is why I started at 8 layers, since I could print out 8 or 16 new parts fairly quickly. I probably went through something like 9 revisions on the cams, adding features, adjusting things so that they could slide more smoothly along the square shaft, and adding some gaps in their contact ring so you can actually peek in and see where the cam mover is located. Trying to do that iteration with 120 parts is a whole different ball game: since the printer can only turn out maybe 12 hooks a day, it takes me close to 2 weeks of printing to change the design.

The other big issue when going up to 60 layers was that just using dead reckoning to position the cam mover exactly in each of the cams no longer worked. With slight variations in cam thickness, dividing the full range by 60 and assuming you would be in the right place stopped working. I’m sure identical injection-molded parts might work at 60+ layers, but my hand-crafted cams did not. Everything was fine at 40, but at 60 it just started falling apart. I ran into this issue on Christmas Eve after one year of working on the loom and had to give up on giving any 60-thread woven gifts for Christmas that year. Oops. Fail.
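To see why this only bites at higher cam counts, here is a toy model of the drift. The thicknesses are invented for illustration (half the cams a hair thick, half a hair thin); I never measured the real cams this way.

```python
def dead_reckoned_centers(full_range, n_cams):
    """Assume every cam has the same thickness: full_range / n_cams."""
    pitch = full_range / n_cams
    return [(i + 0.5) * pitch for i in range(n_cams)]


def actual_centers(thicknesses):
    """Stack the cams one after another and report each one's true center."""
    centers, edge = [], 0.0
    for t in thicknesses:
        centers.append(edge + t / 2)
        edge += t
    return centers


def worst_error(thicknesses):
    """Largest gap between the guessed and the true center of any cam."""
    actual = actual_centers(thicknesses)
    guessed = dead_reckoned_centers(sum(thicknesses), len(thicknesses))
    return max(abs(a - g) for a, g in zip(actual, guessed))


def half_and_half(n_cams):
    """Hypothetical stack in mm: first half 3.20 thick, second half 3.15."""
    return [3.20] * (n_cams // 2) + [3.15] * (n_cams // 2)


for n in (16, 40, 60):
    print(n, "cams: worst miss", round(worst_error(half_and_half(n)), 2), "mm")
```

In this made-up stack the worst miss grows in proportion to the number of cams, so a per-cam error that is invisible at 16 layers becomes a meaningful fraction of a cam's width at 60.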

Adding cam position sensing

At this point, I really wanted a way to tell which position each of the cams is in, so on power up the loom would be able to scan through and check the physical state of the loom. Up until this point, I’d just been leaving the loom in a known state or manually adjusting the cams to that state, and that was a drag. Thankfully I’d put an IR gap sensor leg on each of the cam followers. When the cam was fully forward, the IR sensor would not be blocked, but when the cam was all the way back the IR sensor would be fully blocked. The IR gap sensor moves around with the cam driver, so it always knows about the current cam follower position. This made it easy to write some code to scan through all the cam positions and read the state of all the followers. This really reduced the startup time on the loom if I had left it in some random cam state on power off.
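A startup scan along those lines might look something like the sketch below. `FakeLoom`, its methods, the threshold of 500, and the assumption that a blocked sensor reads higher than an open one are all placeholders, not the real firmware interface.

```python
class FakeLoom:
    """Hypothetical stand-in for the hardware: one IR reading per cam."""

    def __init__(self, ir_readings):
        self.ir_readings = ir_readings
        self.current_cam = 0

    def seek_to_cam(self, cam):
        self.current_cam = cam

    def read_ir(self):
        return self.ir_readings[self.current_cam]


def scan_cam_states(loom, n_cams, blocked_threshold):
    """Visit every cam once and record whether its follower blocks the sensor.

    True means blocked (cam all the way back), False means open (cam forward).
    Assumes a blocked sensor reads at or above the threshold.
    """
    states = []
    for cam in range(n_cams):
        loom.seek_to_cam(cam)
        states.append(loom.read_ir() >= blocked_threshold)
    return states


loom = FakeLoom([120, 880, 905, 95])
print(scan_cam_states(loom, 4, blocked_threshold=500))  # [False, True, True, False]
```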

In order to view the IR sensor output directly, I added a way to poll it over Bluetooth so I could see a kind of chart recorder output from the sensor. This made it easier to set the various thresholds and also see how much noise was in the signal.
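I set the thresholds by eye from that chart, but the same judgment can be written down as a rough sketch: take some samples with the sensor open and some with it blocked, put the threshold halfway between, and compare the gap to the noise. The function name and sample values here are made up.

```python
from statistics import mean, pstdev


def suggest_threshold(open_samples, blocked_samples):
    """Return (threshold, noise) from two batches of polled sensor readings.

    threshold: midpoint between the average open and average blocked reading.
    noise: the larger standard deviation of the two batches.
    """
    open_level = mean(open_samples)
    blocked_level = mean(blocked_samples)
    noise = max(pstdev(open_samples), pstdev(blocked_samples))
    return (open_level + blocked_level) / 2, noise


# Invented readings standing in for what the Bluetooth polling might return.
threshold, noise = suggest_threshold([100, 110, 90], [900, 910, 890])
print(threshold, round(noise, 1))  # 500.0 8.2
```

If the noise is anywhere near the distance from the threshold to either level, the reading can't be trusted at that position.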

How do you deal with positioning if the cams are not all identical?

At first, I thought I could scan for the cam positions using the IR sensor I’d added that detects cam positions. In theory that might work, but sadly because the cam followers are thin enough to not rub against one another and float a bit on the cams, there’s a lot of noise in the exact positions relative to the cams. So scanning their positions was not really the silver bullet I’d hoped for. Thankfully I had another idea. I could number all the cams, then assemble the loom with JUST the cams. Then I used my phone to manually seek the positioner section to the center of every 10th cam. I wrote down those values and wrote some interpolation software that would compute cam positions by interpolating between those values. This caps the interpolation error. It’s almost like having six 10-cam looms lined up. Then you just have to re-assemble the loom with the cams in the same order. This was somewhat annoying to do, but it totally worked, and I was back in business.
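The interpolation itself is plain piecewise-linear lookup. Here is a minimal sketch; the calibration numbers are invented, and the real code in the repo may be organized differently.

```python
from bisect import bisect_right


def cam_position(cam, calibration):
    """Interpolate a motor position for `cam` from hand-measured points.

    calibration: list of (cam_index, measured_position), sorted by cam_index.
    Only the two measured cams on either side of `cam` are used, so an error
    in one stretch of the loom cannot leak into the others.
    """
    indices = [index for index, _ in calibration]
    if not indices[0] <= cam <= indices[-1]:
        raise ValueError("cam is outside the calibrated range")
    k = bisect_right(indices, cam) - 1
    k = min(k, len(calibration) - 2)  # the last measured cam uses the last segment
    (i0, p0), (i1, p1) = calibration[k], calibration[k + 1]
    return p0 + (p1 - p0) * (cam - i0) / (i1 - i0)


# Hypothetical measurements in motor steps at every 10th cam.
calibration = [(0, 0), (10, 1000), (20, 2100)]
print(cam_position(5, calibration))   # 500.0
print(cam_position(15, calibration))  # 1550.0
```

Notice that cams 10 to 20 are spaced wider than cams 0 to 10 in this example; each stretch gets its own pitch, which is what keeps the error from piling up across the whole shaft.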

The hardest part? The On/Off switch.

After the loom was no longer jamming, I worked on redesigning the PCB and making an integrated enclosure. The problem was that the original board had been designed for something that was never going to be turned off, so it used 2 power supplies and had no support for an on/off switch. When I redesigned the board, I decided I wanted to simplify down to a single high-current 19 V laptop supply and used a buck converter to generate the 5 V needed for the LEDs/electronics. However, my initial choice of using an ACT4088 was a bad plan. It didn’t have great application notes, and my initial stab at layout caused some sort of power-on spike that was killing all the downstream electronics. I blew up a number of those chips and even contacted some folks online who’d used them in their projects only to find out they’d had troubles with them too, especially when operating above 12 V. Clearly, I was trying to be too clever, and this was not working. I decided to make that piece of the design someone else’s problem by using a 3-pin switching regulator. I switched to the $3 VX7805-500 and got a whole new version of the PCB made. (V3 at this point)

This seemed to work, but eventually the board would die on power up. Some sort of power-on transient was still killing my boards dead. This is kind of the worst way to die since you think it’s working, fully populate the board, and think you’re done, then it eventually dies and takes $40 of other components with it. At this point I very nearly gave up, but then at the very end I decided to try again with a somewhat more expensive Traco Power TSR 1-2450 three-pin regulator. I also tacked in a 5.6 V Zener to try and add some protection to the 5 V rail and soldered a 10 µF filter cap across the BTLE daughter board since that was the only daughter board that didn’t have its own decoupling cap. This seems to have worked. At least, the most recent build of the board has survived a zillion turn ons now, so I’m going to declare it working. *knock wood*

Frankly, this version of the board is kind of crazy since it actually has the cam motor shaft passing through the board, and it uses a lot of space for a 3rd stepper motor controller that I never populate/don’t expect to populate after giving up on trying to calibrate in a second cam moving motor. So the board really should be shrunk down quite a bit, but it was fun making it fit the enclosure, etc.

One last try

I decided to warp the loom one last time and try once again to weave at 60 threads, and it finally worked without jamming. I could probably go a tiny bit higher on the thread count, but I punted on my original design plan of two cam movers since I was having enough trouble with getting one cam mover to not jam. This limited the range of travel/the total number of cams. It’s also good to have some free space on either side, so one can work in the guts of the loom if something goes wrong. I’ll probably stop at 60 instead of 80, since it’s slow enough as it is.

Video Mish-Mash

I decided to video this project. However, I spent over a year making the loom and shooting video. During that time, I slowly got better at the video-making process, which means that the style and quality of the early videos is way worse than the later videos. This has made for a somewhat lumpy feeling for the final video. There are some sequences I’m very proud of, and others that I think are just horrible looking now. Oh well, it would be too much work to reshoot some of that stuff. It is what it is.

Where to now?

Well, I’m tempted to work on making the loom faster, but a year and a half is a long time to work on a project (for me). Most of my projects are < 6 months, so I may just move on. I would like to work on some error diffusion style weave dithering, maybe write some procedural weaving pattern stuff, but frankly I think all my friends, coworkers, and family have heard enough about weaving/looms to last a lifetime, so maybe I should work on something else.
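For anyone curious what error-diffusion dithering would mean here, this is a rough sketch using the standard Floyd-Steinberg weights. I haven't built this into the loom software; treating each output cell as "thread up" or "thread down" is my assumption, and a real weave would also need limits on how long a thread can float.

```python
def floyd_steinberg(gray):
    """Turn rows of brightness values in [0, 1] into rows of 0/1 cells.

    Each cell is rounded to 0 or 1, and the rounding error is pushed onto the
    not-yet-visited neighbors with the usual 7/16, 3/16, 5/16, 1/16 weights.
    """
    height, width = len(gray), len(gray[0])
    work = [list(row) for row in gray]          # copy so the input is untouched
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            old = work[y][x]
            new = 1 if old >= 0.5 else 0
            out[y][x] = new
            err = old - new
            if x + 1 < width:
                work[y][x + 1] += err * 7 / 16
            if y + 1 < height:
                if x > 0:
                    work[y + 1][x - 1] += err * 3 / 16
                work[y + 1][x] += err * 5 / 16
                if x + 1 < width:
                    work[y + 1][x + 1] += err * 1 / 16
    return out


# A left-to-right gradient, 60 threads wide to match the loom, 8 rows tall.
gradient = [[x / 59 for x in range(60)] for _ in range(8)]
for row in floyd_steinberg(gradient):
    print("".join("#" if cell else "." for cell in row))
```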

I’ve open sourced all the designs and the code, but be warned these things are a dump of the design files/software as I made them. There are no instructions. It’s not the least bit cleaned up. There may not even be super obvious names/labels, so trying to build a functioning loom from those files would be pretty difficult. I was barely able to get the thing to go, and I’m fairly meticulous when building these kinds of things. I’m relieved it worked. Still, if you’re interested in this kind of build, it might give you some inspiration and a place to see what others have done. I’m kind of surprised there aren’t more of these things around. The ability to weave crazy designs is pretty awesome.

I did some more designs to make the probe fold up more tightly so it could fit in my pack of trouble-shooting widgets. I also ordered some cheap ball joints from China since it seemed like I’d be making more of these things. The probe was a HUGE win: Cheap, not that hard to build, makes the impossible possible. What else could I do with this idea?

I downloaded the free version of

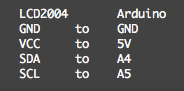

So I ordered one of the boards, and I was off to the races. Adafruit’s page about wiring up the board is very clear, and I hooked up an Arduino UNO just to try it out. I was able to get their UART echo communication going right away. Simple, Pimple.
